Seeing Machines Think: On Observability for AI Agents

There’s an old puzzle in philosophy of mind called the problem of other minds: how do you know that other beings have inner experiences? You can observe their behavior, listen to their speech, watch their reactions, but you can never directly access what’s happening inside. You infer it. You build a model of someone else’s mind from the traces they leave behind.

We now have this exact problem with software.

AI agents (the coding assistants, the autonomous sub-agents, the tool-calling systems that refactor your code while you’re getting coffee) are among the first software systems where the developer often doesn’t know what happened between input and output. Traditional programs follow code paths you wrote; you can trace the call stack. Traditional services are instrumented; you can read the logs. But when an agent spawns three sub-agents, makes fourteen tool calls, reads nine files, writes four, shells out twice, and then tells you “Done,” it has an inner life you cannot see.

This is the problem Project Telescope was built to solve.

The Legibility Gap

There’s a concept in urban planning called “legibility” — the degree to which a city’s structure can be understood by the people navigating it. A legible city has clear landmarks, consistent patterns, readable signs. An illegible city works fine for locals but bewilders newcomers. You can use it, but you can’t reason about it.

Agent-assisted development has a legibility problem. The agents work. They often work well. But their behavior is illegible. When a Copilot session goes sideways, there is no stack trace. When Claude Code burns through tokens on a loop, there is no flame graph. When a sub-agent rewrites a config file it shouldn’t have touched, there is no audit trail.

The usual developer instinct is to reach for logs. But agent logs — when they exist at all — are raw, unstructured, agent-specific, and scattered across different directories in different formats. Copilot writes JSONL to one path; Claude Code writes JSONL to another. There is no common schema. There is no unified view. There is no way to ask the simplest possible question: what did my agents do today?
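To make the missing piece concrete, here is a minimal sketch of what a common schema over two agent-specific JSONL formats could look like. Everything in it is an assumption for illustration: the agent names `agent_a` and `agent_b`, both record layouts, and the `AgentEvent` fields are hypothetical and do not describe any real agent's log format.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """One normalized event. This common schema is an illustrative assumption."""
    agent: str
    timestamp: str
    kind: str
    detail: str


def normalize(agent: str, line: str) -> AgentEvent:
    """Map one JSONL line from a hypothetical agent-specific layout to AgentEvent."""
    record = json.loads(line)
    if agent == "agent_a":
        # Hypothetical layout A: flat keys "ts", "type", "msg".
        return AgentEvent(agent, record["ts"], record["type"], record["msg"])
    if agent == "agent_b":
        # Hypothetical layout B: a "time" key plus a nested "event" object.
        event = record["event"]
        return AgentEvent(agent, record["time"], event["name"], event["payload"])
    raise ValueError(f"no normalizer registered for {agent!r}")


def merge_logs(logs: dict[str, list[str]]) -> list[AgentEvent]:
    """Normalize every line from every agent and sort into one timeline."""
    events = [
        normalize(agent, line)
        for agent, lines in logs.items()
        for line in lines
    ]
    # ISO 8601 UTC timestamps in the same format sort correctly as strings.
    return sorted(events, key=lambda e: e.timestamp)
```

With one normalizer per source, "what did my agents do today?" becomes a filter over a single sorted list rather than a search through several directories.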

This gap matters more as agents become more autonomous. The less you supervise, the more you need to be able to audit. The less you type, the more you need to be able to trace. Trust without verification isn’t trust — it’s hope.

Telescope

Telescope is a local-first observability platform for AI agents. Think of it less like a logging framework and more like a perceptual system — a way of making agent behavior available to human cognition.

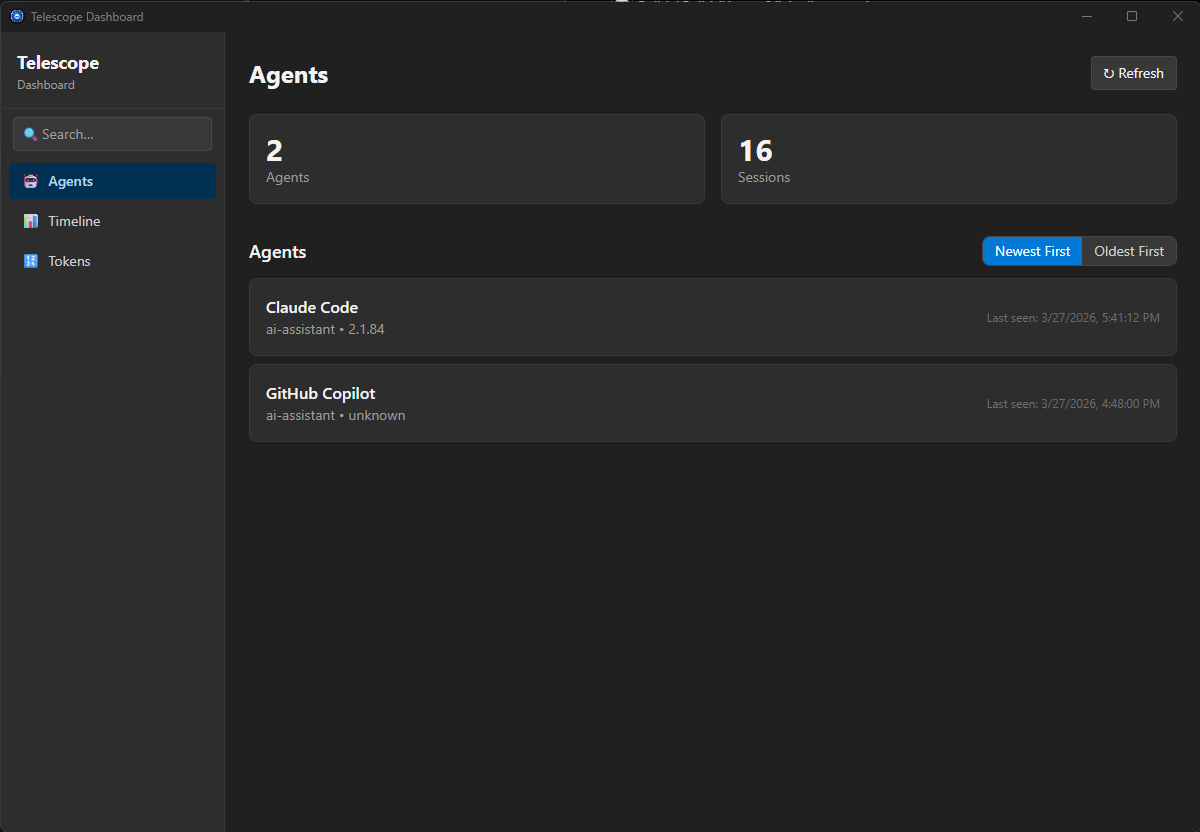

It runs entirely on your machine. No cloud accounts, no API keys, no network egress. Everything lives in ~/.telescope/ as SQLite databases. You install it, start the service, and it begins watching your agents — GitHub Copilot, Claude Code, any MCP-compatible agent — and giving you a structured, queryable view of what they’re doing.

tele service start # start the service + collectors

tele agents list # what agents are active?

tele sessions list # what sessions have they had?

tele watch # live stream of agent activity

tele dashboard # visual explorer

What You Get

The core promise is simple: you see everything your agents did, across all agents, in one place.

The CLI gives you structured access to agent data. List active agents, browse sessions, drill into individual turns, inspect side effects — all from your terminal with familiar, composable commands.

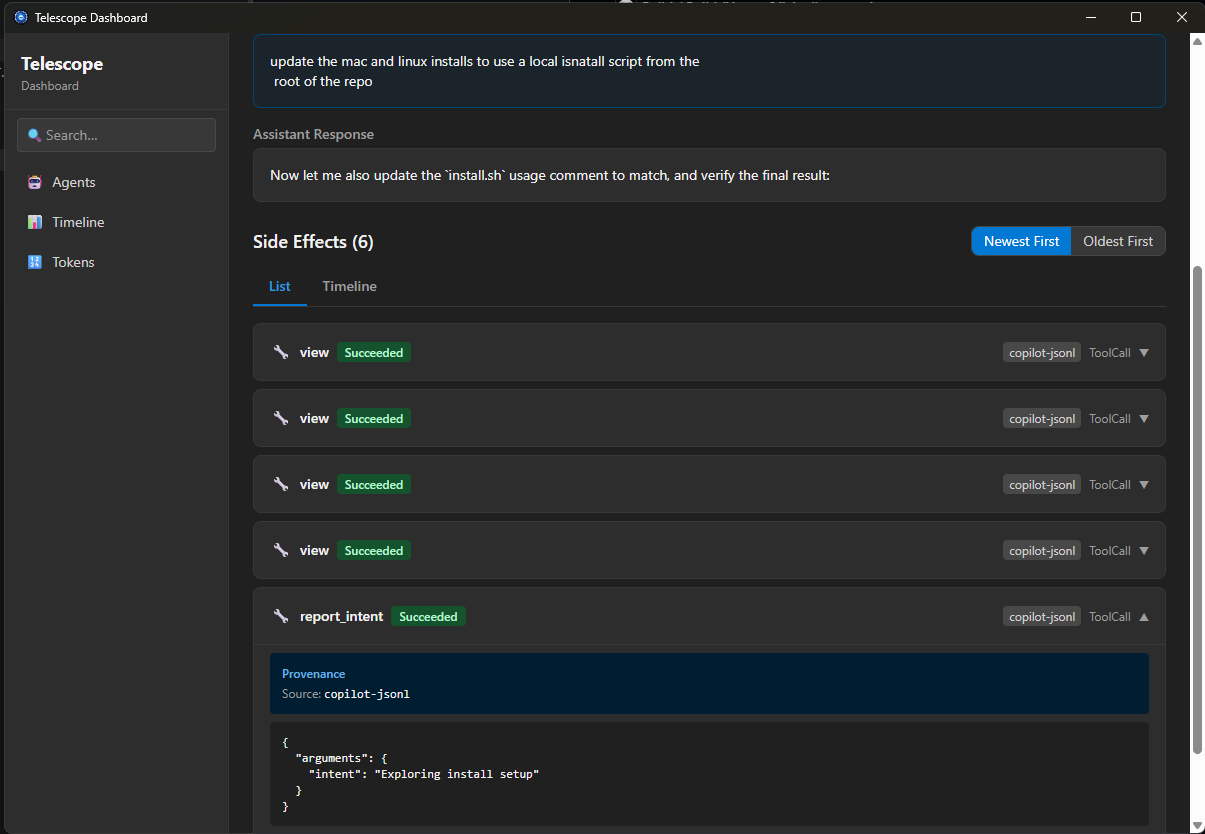

The dashboard is a desktop app that visualizes the same data with drill-down navigation: agents → sessions → turns → side effects. There’s a timeline view that shows spans, not just rows. You can trace exactly what happened in a session — which files were read, which tools were called, what was written — and in what order.

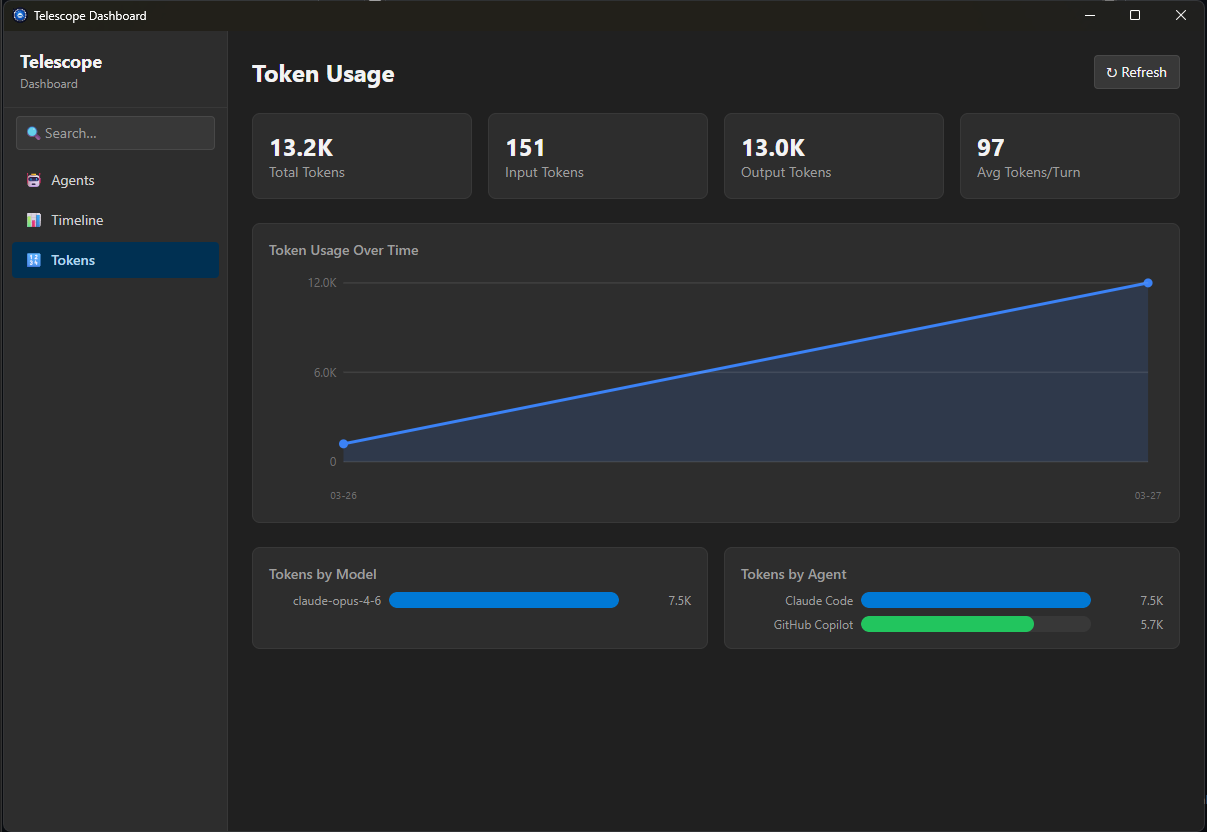

Token usage analytics are first-class. You can see cost breakdowns by model, by agent, over time. If you’ve ever wondered where your tokens are going, this answers that question immediately.
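As a rough illustration of what a per-model cost breakdown involves, the sketch below sums token counts per turn and multiplies by a price table. The model names, the prices, and the shape of each turn record are all hypothetical; real prices vary by provider and model, and this is not Telescope's own calculation.

```python
from collections import defaultdict

# Hypothetical prices in USD per million tokens (assumption, not real pricing).
PRICE_PER_MILLION = {
    "model-small": {"input": 1.0, "output": 2.0},
    "model-large": {"input": 10.0, "output": 30.0},
}


def cost_by_model(turns: list[dict]) -> dict[str, float]:
    """Return total estimated cost in USD for each model seen in `turns`."""
    totals: dict[str, float] = defaultdict(float)
    for turn in turns:
        price = PRICE_PER_MILLION[turn["model"]]
        totals[turn["model"]] += (
            turn["input_tokens"] * price["input"]
            + turn["output_tokens"] * price["output"]
        ) / 1_000_000
    return dict(totals)
```

Grouping by agent or by day instead of by model is the same loop with a different key.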

Every side effect an agent produces — file writes, shell commands, git operations — is captured and browsable. You can drill into any session and see precisely what changed, when, and which turn triggered it.
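Because the data is stored in SQLite, this kind of drill-down is in principle a join from side effects back to the turns that triggered them. The sketch below uses an in-memory database with a hypothetical schema; the table names, column names, and sample rows are assumptions, and Telescope's real schema may differ.

```python
import sqlite3

# Hypothetical schema: Telescope's real tables and columns may differ.
SCHEMA = """
CREATE TABLE turns (
    id INTEGER PRIMARY KEY,
    session_id TEXT NOT NULL,
    seq INTEGER NOT NULL
);
CREATE TABLE side_effects (
    id INTEGER PRIMARY KEY,
    turn_id INTEGER NOT NULL REFERENCES turns(id),
    kind TEXT NOT NULL,
    target TEXT NOT NULL,
    at TEXT NOT NULL
);
"""


def make_demo_db() -> sqlite3.Connection:
    """Build an in-memory database with a few made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO turns VALUES (?, ?, ?)",
        [(1, "s1", 1), (2, "s1", 2), (3, "s2", 1)],
    )
    conn.executemany(
        "INSERT INTO side_effects VALUES (?, ?, ?, ?, ?)",
        [
            (1, 1, "file_write", "src/app.py", "2024-01-01T10:00:05Z"),
            (2, 2, "shell", "npm test", "2024-01-01T10:01:00Z"),
            (3, 3, "git", "commit", "2024-01-01T11:00:00Z"),
        ],
    )
    return conn


def side_effects_for_session(conn: sqlite3.Connection, session_id: str) -> list[tuple]:
    """List (turn number, kind, target, time) for one session, oldest first."""
    cursor = conn.execute(
        """
        SELECT t.seq, s.kind, s.target, s.at
        FROM side_effects AS s
        JOIN turns AS t ON t.id = s.turn_id
        WHERE t.session_id = ?
        ORDER BY s.at, s.id
        """,
        (session_id,),
    )
    return cursor.fetchall()
```

Calling `side_effects_for_session(make_demo_db(), "s1")` returns the two side effects of session `s1` in time order, each tagged with the turn that produced it.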

The MCP proxy is perhaps the most powerful feature. It sits between your AI agent and any MCP server, transparently capturing every tool call, resource read, and prompt — without changing how your agents work. One command instruments all your existing configs:

tele setup # instrument Copilot, Claude, Cursor, VS Code

tele setup --undo # restore original configs

Telescope understands that not all agents work the same way. It normalizes behavior from different agents — GitHub Copilot, Claude Code, and others — into a unified view. You don’t need to learn different log formats or hunt through different directories. Everything is in one place, queryable, and structured.
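Returning to the MCP proxy: the core idea of a transparent capture layer can be sketched in a few lines. MCP messages are JSON-RPC 2.0, and `tools/call`, `resources/read`, and `prompts/get` are request methods defined by the protocol. The `relay` function, its `forward` callback, and the shape of the captured record are hypothetical names chosen for this sketch; it is not Telescope's implementation.

```python
import json
from typing import Callable

# MCP request methods this sketch records: tool calls, resource reads, prompt fetches.
RECORDED_METHODS = {"tools/call", "resources/read", "prompts/get"}


def relay(line: str, forward: Callable[[str], None], captured: list[dict]) -> None:
    """Record a message if it is of interest, then forward the line unchanged."""
    try:
        message = json.loads(line)
    except json.JSONDecodeError:
        message = None  # Not JSON: nothing is recorded, but it is still forwarded.
    if isinstance(message, dict) and message.get("method") in RECORDED_METHODS:
        captured.append(
            {
                "id": message.get("id"),
                "method": message["method"],
                "params": message.get("params", {}),
            }
        )
    # The original text is passed on byte-for-byte, so the agent and the
    # server see exactly what they would have seen without the proxy.
    forward(line)
```

The design choice that matters is that recording never alters or blocks the forwarded line; that is what lets a proxy sit in the path without changing how the agent behaves.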

Why Local-First

A reasonable question: why not build this as a cloud service? Aggregate data across teams, build dashboards at org scale, do cross-user analytics?

Because the data is too sensitive. Agent sessions contain your code, your prompts, your file contents, your git history. Sending that to a remote server, even an internal one, changes the calculus of what you’re willing to let agents do. If you know your agent activity is being uploaded somewhere, you self-censor. You restrict agent access. You disable features. The observation changes the behavior.

By keeping everything local (SQLite databases in your home directory, no network egress), Telescope removes that concern: the data never leaves your machine. You get observability without surveillance. The agent can see everything, and you can see what the agent sees, and nobody else is watching.

The Deeper Point

We are in a peculiar moment in software development. For the first time, we routinely delegate meaningful engineering work to systems whose reasoning we cannot inspect. We trust them because they usually produce correct output, not because we understand their process. This is a new kind of trust — outcome-based rather than process-based.

Telescope doesn’t try to make agents transparent in the AI-interpretability sense. It doesn’t explain why an agent chose one approach over another. What it does is make the behavioral trace legible — the sequence of actions, decisions, tool calls, and side effects that constitute what the agent did. This is closer to how we understand other people: not by reading their neurons, but by watching their behavior and building a model.

You can’t debug what you can’t see. You can’t optimize what you can’t measure. You can’t trust what you can’t audit.

Your agents are doing more than you think. Now you can see it.

Project Telescope is available as an open-source preview at github.com/microsoft/project-telescope.